Physical History

Fast Entropy and the eth Law

By Mark Ciotola

First published on May 17, 2019. Last updated on January 19, 2021.

“The question is not whether nature abhors a vacuum, but how much nature abhors it.”

Introduction

Here we introduce the eth Law of Thermodynamics, known more descriptively as Fast Entropy. (Here, “e” represents the transcendental number e, which is about 2.718. The number e fits nicely between Laws 2 and 3 of Thermodynamics and expresses the importance of the eth Law in numerous cases of exponential growth.)

Physics is a relatively “pure” subject. It is not as pure as mathematics, but the motions and behaviors of subatomic particles exhibit a beauty and perfection reminiscent of the celestial spheres of the ancient Greeks. Newton’s Three Laws likewise brook no ambiguity, and describe a precise ballet of mechanical motion in the vacuum of the planetary heavens.

With this purity in mind, physicists tend to consider thermodynamic systems in terms of before and after a change. Thermodynamic processes themselves tend to be “messy”. The state of a system in terms of entropy, temperature and other quantities is compared before and after a change, such as heat flow or the performance of work. By doing so, it is possible to neglect the amount of time required for thermodynamic changes to take place. This works well in physics, and the First and Second Laws of Thermodynamics typically suffice.

However, much of the world is a mess (involving tremendous complexity and uncertainty) and frequently must be studied in less than ideal conditions. Further, in the fields of Physical History and Economics, time is of the essence. Utopian idealism aside, how long changes take can make all of the difference in societies. For example, people can’t wait forever to be fed and late armies will often lose wars.

The element of time must be introduced in order to apply thermodynamics to social science, which is the thrust of this entire book. This chapter will do so.

Fast Entropy As A Unifying Principle

Fast entropy can be used as a unifying principle among both the physical and social sciences. Fast entropy has applications in applied and professional fields as well. A better name for fast entropy could be the eth Law of Thermodynamics.

The eth Law of Thermodynamics states that an isolated system will tend to configure itself to maximize the rate of entropy production.[1]

Heat flow through a thermal conductor example

Most introductory physics textbooks do have an example concerning thermodynamics that involves time.[2] Picture a simple thermal conductor through which energy flows from a hot reservoir to a cold one. Here, the term reservoir refers to a body whose temperature remains constant regardless of how much heat energy flows into or out of it.[3]

Heat flows through a thermal conductor. The magnitude of that flow is proportional to both the area of the conductor and its thermal conductivity. More heat will flow through a broad conductor than a narrow one. Also, more heat will flow through a material with a high thermal conductivity such as aluminum than through one with low thermal conductivity such as wood. Heat flow is inversely proportional to the conductor’s length. Thus, more heat will flow through a short conductor than a long one.

Heat flow is also proportional to the difference in the two temperatures that the thermal conductor bridges. This difference in temperatures has nothing to do with the conductor itself. A greater temperature difference will provide a greater heat flow across a given conductor, regardless of the characteristics of that conductor.

Equation for thermal energy flow through a conductor:

\(\frac{\Delta Q}{\Delta t} = k A \frac{\Delta T}{L} \).

where Q is the thermal energy that flows, t is time, k is the thermal conductivity (a constant dependent upon the conductor material), L is conductor length, A is conductor area, and \(\Delta T\) is the temperature difference. This equation states how much heat will flow through a conductor per unit time, assuming the temperature difference remains constant. So once again, we face an example that is constant with respect to time, but it provides a reasonable starting point.
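The equation can be evaluated directly. The following minimal Python sketch compares an aluminum and a wooden conductor of identical geometry. The function name `heat_flow_rate`, the dimensions, and the conductivity values (rough, commonly quoted figures, not taken from this chapter) are assumptions for illustration only.

```python
def heat_flow_rate(k, area, delta_t, length):
    """Return the steady heat flow rate in watts: k * A * delta_T / L."""
    if length <= 0:
        raise ValueError("length must be positive")
    return k * area * delta_t / length


# Approximate thermal conductivities in W/(m*K); assumed illustrative values.
K_ALUMINUM = 205.0
K_WOOD = 0.12

area = 0.01     # conductor cross-sectional area in m^2 (assumed)
length = 0.5    # conductor length in m (assumed)
delta_t = 80.0  # temperature difference between the reservoirs in K (assumed)

q_aluminum = heat_flow_rate(K_ALUMINUM, area, delta_t, length)
q_wood = heat_flow_rate(K_WOOD, area, delta_t, length)

print(f"aluminum: {q_aluminum:.1f} W, wood: {q_wood:.3f} W")
```

Doubling the length halves the flow and doubling the area doubles it, as the proportionalities described above require.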

Electrical engineers will find this equation similar to a rearrangement of Ohm’s Law, where electric current is proportional to voltage divided by resistance:

\(I = \frac{V}{R}. \)
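To make the analogy explicit, one can define a thermal resistance \(R_{th}\) (a conventional shorthand that is introduced here for illustration, not a symbol used elsewhere in this chapter). The conduction equation above then takes the same form as Ohm’s Law:

\(\frac{\Delta Q}{\Delta t} = \frac{\Delta T}{R_{th}}, \qquad R_{th} = \frac{L}{k A}. \)

Here the heat flow \(\Delta Q / \Delta t\) plays the role of the current I, and the temperature difference \(\Delta T\) plays the role of the voltage V.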

Recalling The Second Law of Thermodynamics

The Second Law of Thermodynamics states that the universe is moving towards greater entropy. Stated another way, the entropy of an isolated system shall tend to increase.[4] A corollary is that a system will approach a state of maximum entropy if given enough time. A system in a state of maximum entropy is analogous to a system in equilibrium.

However, neither the law nor its corollary describes the rate at which entropy shall be produced, nor how long it would take a system to produce maximum entropy.

The eth Law: Fast Entropy

The author has proposed[5] that the Second Law can be extended by stating that not only will entropy tend to increase, but also it will tend to do so as quickly as possible.[6] (Others have made the same observation, e.g. A. Annila and R. Swenson.) In other words, entropy increase will not happen in a lazy, casual way. Rather, entropy will increase in a relentless, vigorous manner. The author calls this extension the eth Law of Thermodynamics[7], or more descriptively, Fast Entropy. A more precise statement of the eth Law is that “entropy increase shall tend to be subject to the principle of least time.” The eth Law gives teeth to the Second Law. It will need those teeth in order to be useful for the social sciences.

Really, though, the eth Law is already widely practiced by astrophysicists and atmospheric scientists. Whether a stellar or planetary atmosphere tends to convect or radiate depends on which results in the greatest heat flow. The maximization of heat flow results in the maximization of entropy increase, so this scenario represents the eth Law in action.
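The link between heat flow and entropy production can be made explicit for the simple case of heat passing between two reservoirs. As a sketch, assume a hot reservoir at temperature \(T_H\) and a cold one at \(T_C\) (symbols introduced here for illustration). The standard expression for the rate of entropy production is then:

\(\frac{\Delta S}{\Delta t} = \frac{\Delta Q}{\Delta t} \left( \frac{1}{T_C} - \frac{1}{T_H} \right). \)

Since \(T_H > T_C\), the bracketed factor is positive and fixed by the reservoirs, so whichever transport mechanism carries the greater heat flow also produces entropy at the greater rate.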

More Precise Statement of the eth Law

The eth Law needs to be stated more precisely to be of much use. A more precise statement is that “entropy increase shall tend to be subject to the Principle of Least Time.” The Principle of Least Time is a general principle in physics that applies to diverse areas such as mechanics and optics. Snell’s Law of Refraction is an example.
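How the Principle of Least Time yields Snell’s Law can be checked numerically. The sketch below, written in Python, finds the point at which a ray should cross a flat interface between two media so that total travel time is smallest, then compares the ratio of the sine of each angle to the speed in that medium. The geometry, the speeds, and the function names are all assumptions chosen for illustration.

```python
import math


def travel_time(x, a, b, d, v1, v2):
    """Time for a ray from (0, a) to (d, -b) that crosses the interface y = 0 at (x, 0)."""
    return math.hypot(x, a) / v1 + math.hypot(d - x, b) / v2


def least_time_crossing(a, b, d, v1, v2, iterations=200):
    """Ternary search on [0, d] for the crossing point that minimizes travel time.

    The travel time is a convex function of x, so ternary search converges.
    """
    lo, hi = 0.0, d
    for _ in range(iterations):
        m1 = lo + (hi - lo) / 3
        m2 = hi - (hi - lo) / 3
        if travel_time(m1, a, b, d, v1, v2) < travel_time(m2, a, b, d, v1, v2):
            hi = m2
        else:
            lo = m1
    return (lo + hi) / 2


# Assumed geometry (arbitrary length units) and speeds (arbitrary units).
a, b, d = 1.0, 1.0, 2.0
v1, v2 = 1.0, 0.75  # the second medium is slower

x = least_time_crossing(a, b, d, v1, v2)

# Sines of the angles measured from the normal to the interface.
sin1 = x / math.hypot(x, a)
sin2 = (d - x) / math.hypot(d - x, b)

print(f"sin(theta1)/v1 = {sin1 / v1:.6f}")
print(f"sin(theta2)/v2 = {sin2 / v2:.6f}")
```

The two printed ratios agree to within the numerical precision of the search, which is Snell’s Law expressed in terms of speeds. The least-time path also spends more of its horizontal distance in the faster medium.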

Physical Examples

Neither the eth Law nor Fast Entropy will be found in a typical physics textbook, although they could be said to fall under non-equilibrium thermodynamics or transport theory discussed in some texts. Fast Entropy involves an element of change over time that can involve challenging mathematics and measurements. Nevertheless, a few simple examples can be offered to support the validity of Fast Entropy.

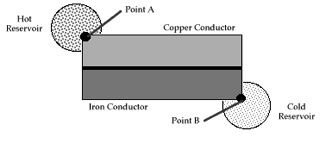

One example is heat flow through two parallel conductors, each bridging the same two thermal reservoirs (see figure). Whatever the area, material or other characteristics of each conductor, the percentage of heat that flows through each conductor is always that which maximizes total heat flow. In this case, when total heat flow is maximized, so too is entropy production.

Thermal conductors in parallel
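The split of heat between parallel conductors follows from applying the conduction equation to each one separately. The Python sketch below computes that split for two conductors; the function names and all numerical values are assumptions for illustration. It only shows how the shares arise and does not by itself prove the maximization claim.

```python
def conductance(k, area, length):
    """Thermal conductance k * A / L of a single conductor, in W/K."""
    return k * area / length


def parallel_split(conductors, delta_t):
    """Return (total heat flow in W, list of fractional shares).

    `conductors` is a list of (k, area, length) tuples, all bridging
    the same two reservoirs with temperature difference `delta_t`.
    """
    flows = [conductance(k, area, length) * delta_t for k, area, length in conductors]
    total = sum(flows)
    return total, [flow / total for flow in flows]


# Two assumed conductors: (k in W/(m*K), area in m^2, length in m).
conductors = [(200.0, 0.01, 0.5), (50.0, 0.02, 0.5)]

total_flow, shares = parallel_split(conductors, delta_t=30.0)
print(f"total: {total_flow:.1f} W, shares: {[round(s, 3) for s in shares]}")
```

The shares depend only on the ratio of the conductances, not on the temperature difference. Each conductor carries as much as the conduction equation allows, so the total is the sum of the individual flows.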

Another example is heat flow through conductors in series between a warmer and cooler heat reservoir (see figures). This example replicates the classic demonstration of the applicability of the Principle of Least Time in optics (Snell’s Law), but using thermal conductors in place of refractive material, and replacing the entrance point of light with a contact point with a warmer reservoir and the exit point of light with a contact point with a cooler reservoir.[9]

Thermal conductors in series

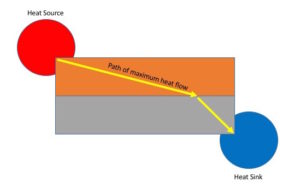

While heat flow tends to be a nebulous affair, the path of maximum heat flow can nevertheless be ascertained. This can be accomplished by noting the paths perpendicular to isotherms, which are indicated by placing temperature-sensitive color indicator film upon the conductors (below). The greatest color change gradient represents the path of maximum heat flow. Observations show that the path of maximum heat flow is consistent mathematically with Snell’s Law (which is based upon the principle of least time but usually reserved for light rays). This example is reasonably easy to replicate.

Idealized path of maximum heat flow through conductors in series

A third example is well known to atmospheric scientists. In an atmosphere where heat is flowing from a warm planetary or stellar surface, whether thermal radiation or convection will occur tends to depend upon whichever produces the greatest heat flow. Whichever produces the greatest heat flow tends to produce entropy most quickly.

A Heat Engine Begetting Heat Engines

The work done by heat engines can be used for human activities. Part of it can be used to maintain the heat engine. More significantly, part of the work can go to build additional heat engines. These additional heat engines can produce more work to produce even more heat engines. This idea is pictured here (see figure). The growth of heat engines is then exponential, at least until limiting factors come into play. This is a key point. Because heat engines can beget heat engines, an exponential increase in entropy can take place.

Heat engines begetting heat engines

Here, entropy production is proportional to the quantity of heat engines. Fast entropy favors exponential growth in entropy production, so fast entropy favors the “spontaneous” appearance and endurance of heat engines. Under the Second Law alone, the spontaneous appearance of a heat engine is possible, but improbable. Fast entropy then utilizes those improbable appearances to create self-sustaining, exponentially growing systems.
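The growth described here can be sketched with a simple discrete model in Python. The model, the function name `engine_counts`, the reinvestment rate, and the optional capacity limit are all assumptions for illustration: each engine is taken to fund a fixed fraction of a new engine per time step, and a logistic term stands in for the unspecified limiting factors.

```python
def engine_counts(n0, reinvest_rate, steps, capacity=None):
    """Return the number of heat engines at each time step.

    Each step, every engine funds `reinvest_rate` new engines.
    If `capacity` is given, growth slows as the count approaches it
    (a logistic stand-in for limiting factors).
    """
    counts = [n0]
    for _ in range(steps):
        n = counts[-1]
        growth = reinvest_rate * n
        if capacity is not None:
            growth *= 1.0 - n / capacity
        counts.append(n + growth)
    return counts


# Assumed values: one starting engine, 10% reinvestment per step.
unlimited = engine_counts(n0=1.0, reinvest_rate=0.1, steps=50)
limited = engine_counts(n0=1.0, reinvest_rate=0.1, steps=50, capacity=100.0)

# Entropy production is taken as proportional to the engine count.
print(f"unlimited after 50 steps: {unlimited[-1]:.1f} engines")
print(f"limited after 50 steps:   {limited[-1]:.1f} engines")
```

Without a limit, the count follows \(N_0 (1 + r)^t\), which is exponential growth. With a capacity, growth begins exponentially and then levels off.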

Emergence of Complex Dissipation Structure

When systems are far out of equilibrium, there is a tendency for complex structures to form to dissipate potential (Prigogine, ___). Such a process is an example of fast entropy. The emergence of atmospheric convection structures is an example of complex dissipative structures forming. Convective structures tend to form where convection results in greater thermal energy transport from the surface of the Earth to its upper atmosphere than does simple radiation. Storm systems, tornadoes and hurricanes are further examples. The spiral arms of galaxies are similar in appearance to those of hurricanes. This is no coincidence, since the spiral structure of galaxies also results in greater production of entropy (see paper from Naval Observatory astrophysicist ____).

Rising cloud column (credit: NOAA)

Applicability of Fast Entropy to Life and Social Sciences

If Fast Entropy is a fundamental tendency in physics that especially applies to living organisms, life would have evolved to produce entropy in a manner consistent with the Principle of Least Time. Evolution is quite similar to statistical mechanics. It finds the answer it is seeking by rolling the dice an unimaginable number of times. Statistical mechanics, including thermodynamics, operates most reliably upon systems of many components. Evolution likewise requires a sufficiently high population to operate upon. Endangered species are especially at risk, because their populations often become too small to support the evolution of that species, making it especially vulnerable to change. Evolution is whatever survives the “dice throwing” in response to environmental change. Successful mutations outsurvive non-mutants and other mutations to multiply and dominate their environment.

In thermodynamics, the Second Law statistically allows small regions of lower entropy. Most of these regions will quickly disappear due to the random motion of molecules. However, a rare few of these regions, by pure statistical chance, will be able to act as heat engines and will increase overall entropy (despite their own lower entropy). If these rare, entropy-creating regions can reproduce, then they will be favored by fast entropy, and will come to dominate their region. Certain chemical reactions are examples, and from chemistry comes life.

So then, life can be viewed as a literal express lane from lower to higher entropy. Although living organisms comprise regions of reduced entropy, they can only maintain themselves by producing entropy. Life has produced a diversity of organisms in order to maximize entropy production with respect to time. For example, if one drops a sandwich in a San Francisco park, a dog will rush by to bite off a big piece of the sandwich, then the large seagulls will tear away medium-sized pieces to eat. Smaller birds will eat smaller pieces, and insects and bacteria will consume smaller pieces yet. If only one or two of those organisms existed, pieces of certain sizes could not easily be consumed. If they couldn’t be consumed, they could not be used to increase entropy.

Humans are living organisms and do their part to contribute to maximizing entropy production with respect to time. In fact, the more complex, structured and technologically advanced human civilization becomes, the faster it creates entropy. It is true that cities and technology themselves represent regions of lower entropy, but only at the cost of increased overall entropy.

Further Applications

There are both physical and social applications for Fast Entropy.[8] Physically, Fast Entropy might be used to improve heat distribution and removal. Socially, Fast Entropy drives Hubbert Curves. Further, Fast Entropy might be used to determine key parameters of Hubbert curves and constraints upon them.

Fast Entropy analysis requires that some indication of entropy production with respect to time be determined. An exact determination might prove to be difficult, but comparisons of entropy production are easier. For example, if people consume a known mean number of calories, then the more people a regime has, the more entropy it produces. Most historic regimes have a sufficiently low level of technology that this type of analysis is quite practicable.
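A comparison of this kind can be sketched in a few lines of Python. The sketch rests on a crude assumption that is not stated in the text: all food energy consumed is eventually released as heat at a single environmental temperature, so that the entropy production rate is roughly metabolic power divided by that temperature. The function name, populations, calorie intake, and temperature are hypothetical.

```python
KCAL_TO_JOULES = 4184.0
SECONDS_PER_DAY = 86400.0


def metabolic_entropy_rate(population, kcal_per_day, env_temp_kelvin=293.0):
    """Rough entropy production rate in W/K from food consumption alone.

    Assumes all food energy ends up as heat at `env_temp_kelvin`.
    """
    power_watts = population * kcal_per_day * KCAL_TO_JOULES / SECONDS_PER_DAY
    return power_watts / env_temp_kelvin


# Two hypothetical regimes with the same mean diet but different populations.
regime_a = metabolic_entropy_rate(population=1_000_000, kcal_per_day=2000.0)
regime_b = metabolic_entropy_rate(population=250_000, kcal_per_day=2000.0)

print(f"regime A / regime B entropy production: {regime_a / regime_b:.2f}")
```

Because the diet and temperature are the same for both regimes, they cancel in the ratio, which reduces to the ratio of populations. This is why a comparison can be made even when an exact determination is out of reach.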

Conclusions and Future Research

Fast Entropy can be used in history as a criterion of success for a regime. Was a regime overtaken by another regime that was able to produce more entropy more quickly? In economics, Fast Entropy can be used to study the progress of a regime along its Hubbert curve, and infer factors such as efficiency, economic centralization and wealth distribution. Fast Entropy can be a powerful tool for the analysis of proposed social policy. However, an important issue to be investigated is whether and how the value of entropy production needs to be weighted with regard to its distance in time.

Notes & References

[1] However, the behavior of systems at the atomic level can vary from that discussed in this chapter.

[2] One can infer the passage of time by multiplying the calculated heat flow by time. However, this example is not really time dependent. The heat flow remains constant regardless of how much time passes in this idealized example. It is nevertheless a good approximation for many real situations.

[3] Heat flow is also proportional to the difference in two temperatures that the thermal conductor bridges. This difference has nothing to do with the conductors themselves. Heat flows through a thermal conductor in proportion to the area of the conductor as well as its thermal conductivity. More heat will flow through a broad conductor than a narrow one. Also, more heat will flow through a material with a high thermal conductivity such as aluminum than through a material with low thermal conductivity such as wood. Heat flow is inversely proportional to the conductor’s length. More heat will flow through a shorter conductor than a long one. This is known as Fourier’s heat conduction law.

[4] A more precise definition is that “any large system in equilibrium will be found in the macrostate with the greatest multiplicity (aside from fluctuations that are normally too small to measure).” D. Schroeder, An Introduction to Thermal Physics. San Francisco: Addison-Wesley, 2002.

[5] This proposed extension was anticipated in a talk given by the author to a COSETI conference (San Jose, CA, Jan. 2001, SPIE Vol. 4273), was presented at a talk entitled Hurtling Towards Heat Death (Sept. 2002) and appeared in the Fall 2003 issue of the North American Technocrat. Subsequent to this proposal, the author has observed that a form of this extension is already in use by astrophysicists and meteorologists. When modeling atmospheres, their models will tend to choose the form of energy transfer that maximizes heat flow, such as convection versus conduction or radiation. See B. Carroll and D. Ostlie, An Introduction to Modern Astrophysics, 2nd Ed., Pearson Addison-Wesley, 2007, p. 315.

[6] The Second and A Half Law is not well known and therefore is neither generally accepted nor rejected by most physicists. Although the Second and A Half Law is fairly consistent with standard physics, it is primarily intended for use in the applied physical sciences and the social sciences. There is some possibility that this proposed law is flawed. However, it has some merit and is somewhat better than what we have without it.

[7] As stated above, the e in eth Law refers to the transcendental number e, which is about 2.718.

[8] Psychologist and musician Rod Swenson had proposed some elements of this, perhaps as early as 1989. He suggested that a law of maximum entropy production could apply to economic phenomena.

[9] Mark Ciotola, Olivia Mah, A Colorful Demonstration of Thermal Refraction, arXiv, submitted on 21 May 2014.
